
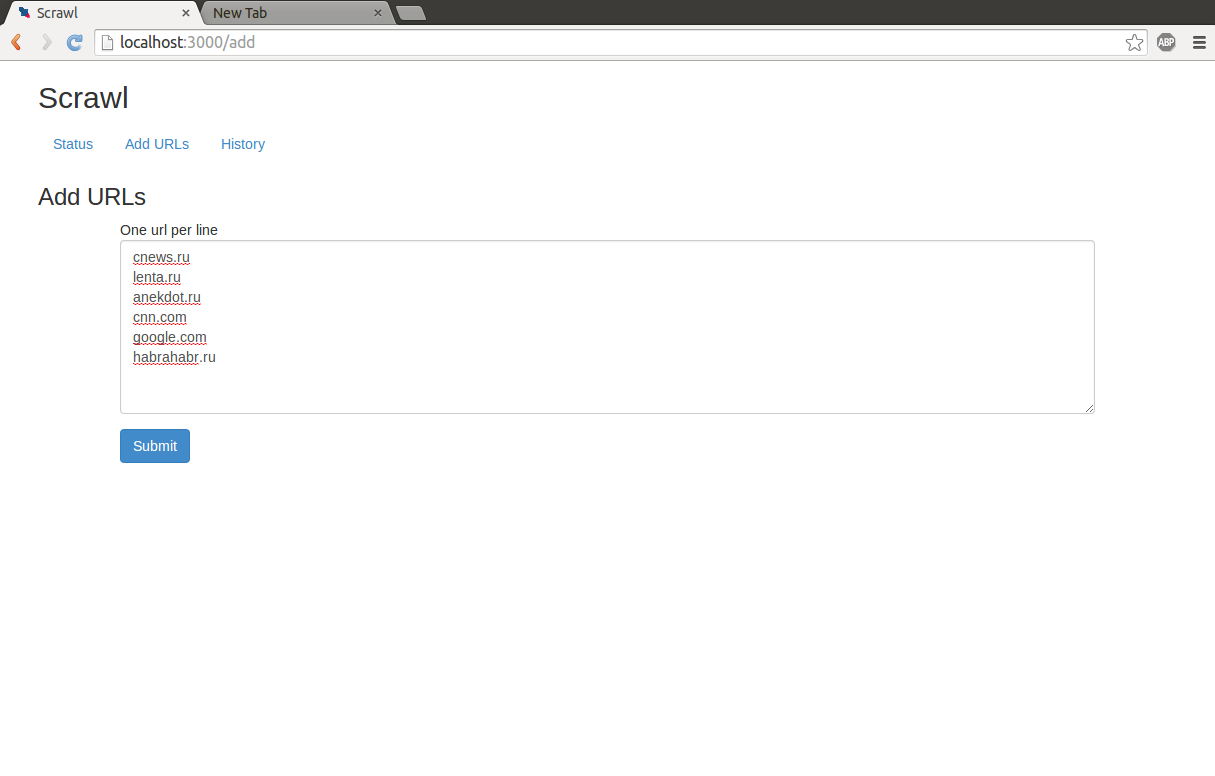

The engine starts by sending requests to the URLs in start_urls and running the default callback (the parse() method in CrawlSpider) on each response.

For each response, the parse() method runs the link extractors on it to get the links from the page. Namely, it calls LinkExtractor.extract_links(response) for each response object to get the URLs, and then yields scrapy.Request objects for those URLs, each tied to the callback of the rule that produced the link.

The example code is a skeleton for a spider that crawls an e-commerce site, following the links of product categories and subcategories to reach the link for each product page. With the rules registered in this spider, it crawls the links inside the "categories" and "subcategories" lists with the parse() method as callback (which causes the crawl rules to be applied to those pages as well), and the links matching the regular expression `product\.php\?id=\d+` with the callback parse_product_page(), which finally scrapes the product data.

As you can see, pretty powerful stuff.
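The link-following loop described above can be approximated with the standard library alone. The sketch below is not Scrapy's implementation: `LinkCollector`, `RULES`, `follow_links`, the `/category/` pattern and the `example.com` URLs are all hypothetical stand-ins, and "first matching rule wins" is a simplification chosen for this sketch. It also escapes the `.` and `?` in the product pattern, because an unescaped `product.php?id=\d+` treats `?` as a quantifier and would not match `product.php?id=42`.

```python
import re
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects href values from <a> and <area> tags (stand-in for a link extractor)."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag in ("a", "area"):
            href = dict(attrs).get("href")
            if href:
                # Resolve relative links against the page URL.
                self.links.append(urljoin(self.base_url, href))


# Hypothetical rules: (compiled URL pattern, callback name).
# "." and "?" are escaped so they match literally.
RULES = [
    (re.compile(r"/category/"), "parse"),
    (re.compile(r"product\.php\?id=\d+"), "parse_product_page"),
]


def follow_links(base_url, html):
    """Yield (url, callback_name) for every link that matches a rule."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    for url in collector.links:
        for pattern, callback_name in RULES:
            if pattern.search(url):
                yield url, callback_name
                break  # first matching rule wins in this sketch


PAGE = """
<a href="/category/shoes">Shoes</a>
<a href="/product.php?id=42">Boot</a>
<a href="/about">About</a>
"""

requests = list(follow_links("http://example.com/", PAGE))
for url, callback_name in requests:
    print(callback_name, url)
```

The `/about` link matches no rule, so nothing is yielded for it; only the category and product links produce follow-up requests.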

So, whenever you want to trigger the rules for a URL, you just need to yield a scrapy.Request(url, self.parse), and the Scrapy engine will send a request to that URL and apply the rules to the response.

The extraction of the links (which may or may not use restrict_xpaths) is done by the LinkExtractor object registered for that rule. It searches for all the `<a>` and `<area>` elements in the whole page, or only in the elements selected by the restrict_xpaths expressions if that attribute is set.

For example, say you have a CrawlSpider that imports CrawlSpider and Rule together with LinkExtractor, defines a rules attribute, and implements a parse_product_page(self, response) callback.

The rules attribute of a CrawlSpider specifies how to extract the links from a page and which callbacks should be called for those links. The rules are handled by the default parse() method implemented in that class; see the CrawlSpider source for the details.

Raise your pint up high in salute to your fellow revellers, and keep it there the entire time, lowering it only to take a drink from your glass. You'll likely be a little wobbly at this point of the trip, so watch yourself!

Find a friendly looking stranger and ask them to take a selfie with you. We're a friendly bunch even when stone cold sober, so this one should be no problem. But if they're not up for it, accept it, move on and ask someone else; don't be a mog.

The guaranteed way to ensure everyone present wakes up with the fear the next day: have a round of Never Have I Ever and spill your most embarrassing secrets.

You can guess: get up on that dance floor and make an absolute fool of yourself. At least it's intentional this time.

Congratulations lads, you've made it. You've succeeded in the (not so) ancient Irish tradition of the 12 Pubs of Christmas. Relax and enjoy your drink; you've earned it.
